Difference between revisions of "JAR v.IS Project Findings"

Albertb.2013 (talk | contribs) |


| (9 intermediate revisions by 2 users not shown) | |||

| Line 117: | Line 117: | ||

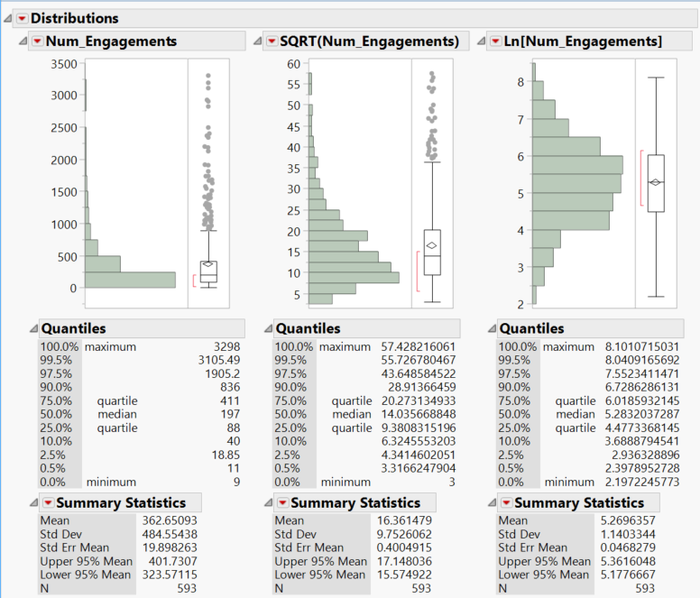

<p>We perform the transformation on the variables to make them more suitable for regression analysis. We perform a square root transformation as well as a natural logarithm transformation on all response and explanatory variables whose distributions are not normal to reduce skewness and yield a more normal distribution.</p><br> | <p>We perform the transformation on the variables to make them more suitable for regression analysis. We perform a square root transformation as well as a natural logarithm transformation on all response and explanatory variables whose distributions are not normal to reduce skewness and yield a more normal distribution.</p><br> | ||

| − | [[File:Article_transformation.png|center| | + | [[File:Article_transformation.png|700px|center]] |

| + | {|style="width:100%;vertical-align:top;margin-top:20px;" | ||

| + | |- | ||

| + | |style="vertical-align:top;width:30%;" | <div style="background: #ffffff; text-align:center; line-height: wrap_content; text-align: center;font-size:12px">Transforming the Response Variables and removing the outliers</div> | ||

| + | |||

<br> | <br> | ||
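The effect of the two transformations on skewness can be sketched in a few lines of Python. This is a minimal illustration only: the project used JMP, the `engagement` values are hypothetical and not taken from the project data, and `skewness` is a helper written for this sketch.

```python
import math


def skewness(values):
    """Population skewness: third central moment divided by sd cubed."""
    n = len(values)
    mean = sum(values) / n
    m2 = sum((v - mean) ** 2 for v in values) / n
    m3 = sum((v - mean) ** 3 for v in values) / n
    return m3 / m2 ** 1.5


# Hypothetical right-skewed counts (illustrative, not project data).
engagement = [3, 5, 8, 12, 20, 35, 60, 110, 250, 900]

# Square root transformation.
sqrt_engagement = [math.sqrt(v) for v in engagement]
# Natural logarithm transformation (requires strictly positive values).
ln_engagement = [math.log(v) for v in engagement]

for label, data in [("raw", engagement),
                    ("sqrt", sqrt_engagement),
                    ("ln", ln_engagement)]:
    print(label, round(skewness(data), 2))
```

On this toy sample both transformations pull the skewness towards zero, with the logarithm doing so more strongly. Which transformation suits a given variable still has to be judged per variable, for example from the bivariate fits.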

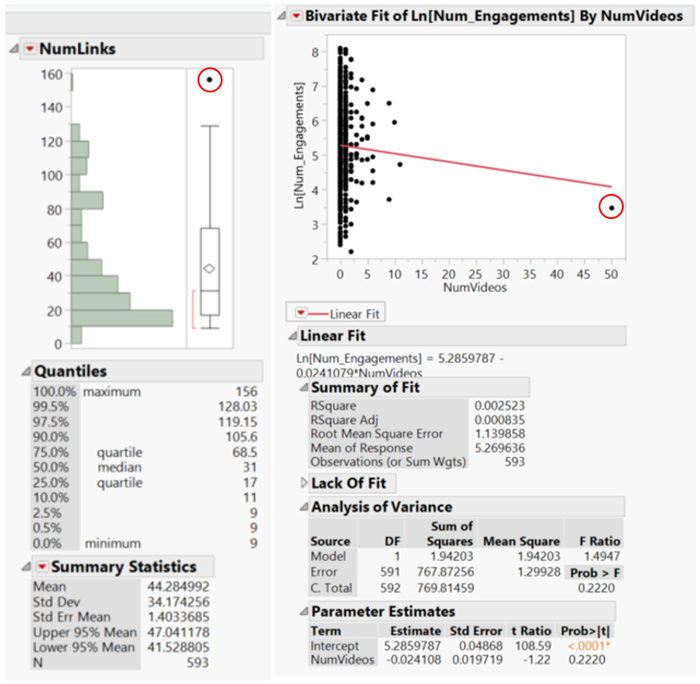

<p>The outliers for the explanatory variables are judged by the independent variable distributions as well as the scatterplots of the response variable against the explanatory variables. We remove the following data points (as circled in the figure) as outliers. </p><br> | <p>The outliers for the explanatory variables are judged by the independent variable distributions as well as the scatterplots of the response variable against the explanatory variables. We remove the following data points (as circled in the figure) as outliers. </p><br> | ||

| − | [[File:outlier.png|center| | + | |

| + | [[File:outlier.png|700px|center]] | ||

| + | {|style="width:100%;vertical-align:top;margin-top:20px;" | ||

| + | |- | ||

| + | |style="vertical-align:top;width:30%;" | <div style="background: #ffffff; text-align:center; line-height: wrap_content; text-align: center;font-size:12px">Transforming the Explanatory Variables and removing the outliers</div> | ||

| + | |||

<br> | <br> | ||

| Line 130: | Line 139: | ||

</p> | </p> | ||

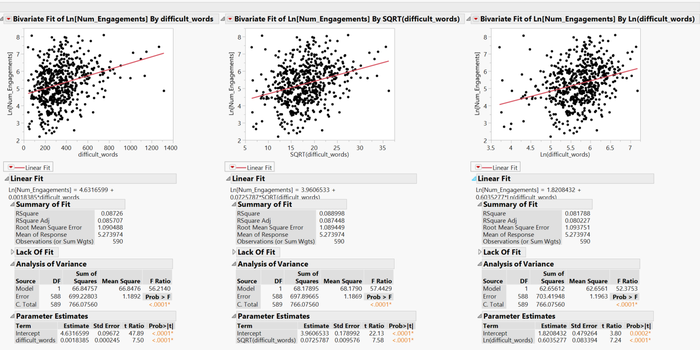

| − | + | [[File:Bivfit.png|700px|center]] | |

| − | [[File:Bivfit.png|center| | + | {|style="width:100%;vertical-align:top;margin-top:20px;" |

| + | |- | ||

| + | |style="vertical-align:top;width:30%;" | <div style="background: #ffffff; text-align:center; line-height: wrap_content; text-align: center;font-size:12px">Bivariate fit of difficult words count. We select the SQRT transformation instead of the Ln transformation</div> | ||

<p> | <p> | ||

| Line 144: | Line 155: | ||

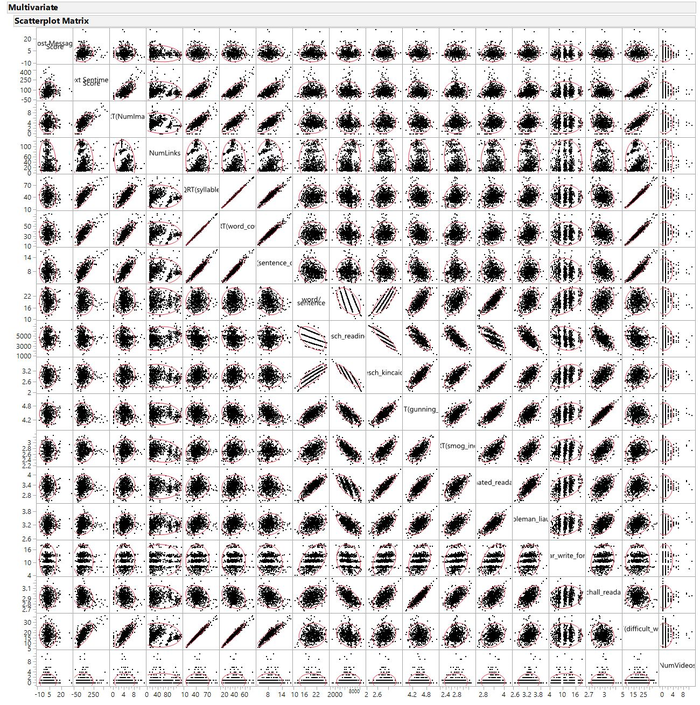

<p>We also ran bivariate fit against all the 18 numerical explanatory variables to test for multicollinearity. The figure below shows the bivariate correlation scatterplot.</p> | <p>We also ran bivariate fit against all the 18 numerical explanatory variables to test for multicollinearity. The figure below shows the bivariate correlation scatterplot.</p> | ||

| − | [[File:Bivfitscattermatrix.png|center| | + | [[File:Bivfitscattermatrix.png|700px|center]] |

| + | {|style="width:100%;vertical-align:top;margin-top:20px;" | ||

| + | |- | ||

| + | |style="vertical-align:top;width:30%;" | <div style="background: #ffffff; text-align:center; line-height: wrap_content; text-align: center;font-size:12px">Bivariate correlation scatterplot matrix for all 18 numerical variables for the article model</div> | ||

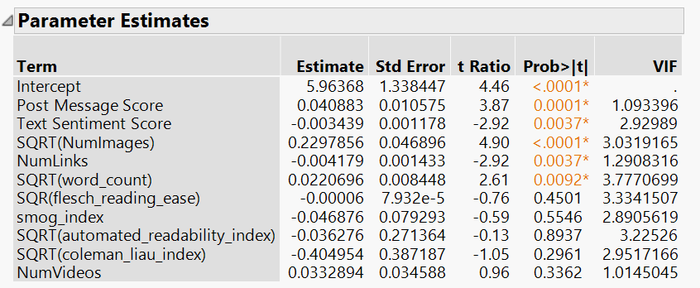

<p>Using this scatterplot together with the bivariate correlation matrix, we eliminated 8 variables that are highly correlated. We ran Standard Least Squares regression on continuous numerical variables to verify the absence of multicollinearity in our remaining variables.</p> | <p>Using this scatterplot together with the bivariate correlation matrix, we eliminated 8 variables that are highly correlated. We ran Standard Least Squares regression on continuous numerical variables to verify the absence of multicollinearity in our remaining variables.</p> | ||

| − | [[File:vifparamest.png|center| | + | |

| + | [[File:vifparamest.png|700px|center]] | ||

| + | {|style="width:100%;vertical-align:top;margin-top:20px;" | ||

| + | |- | ||

| + | |style="vertical-align:top;width:30%;" | <div style="background: #ffffff; text-align:center; line-height: wrap_content; text-align: center;font-size:12px">Parameter Estimates with VIF statistics</div> | ||

| + | |||

<p>As a result, we have the narrowed down version of our final list of numerical continuous explanatory variables to explain the variation of our response variables for the article regression model in preparation for the next step which is the stepwise regression.</p> | <p>As a result, we have the narrowed down version of our final list of numerical continuous explanatory variables to explain the variation of our response variables for the article regression model in preparation for the next step which is the stepwise regression.</p> | ||
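The multicollinearity screen described above can be approximated outside JMP as follows. This is a rough sketch under stated assumptions: the column names and values are hypothetical, the 0.8 cut-off is an illustrative choice rather than the threshold used in the project, and `vif_from_r2` only applies the standard definition VIF = 1 / (1 - R<sup>2</sup>), where R<sup>2</sup> comes from regressing one explanatory variable on all the others.

```python
import math
from itertools import combinations


def pearson(x, y):
    """Pearson correlation coefficient between two equal-length lists."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def highly_correlated_pairs(columns, threshold=0.8):
    """Return (name_a, name_b, r) for every pair with |r| >= threshold."""
    flagged = []
    for (name_a, a), (name_b, b) in combinations(columns.items(), 2):
        r = pearson(a, b)
        if abs(r) >= threshold:
            flagged.append((name_a, name_b, r))
    return flagged


def vif_from_r2(r2):
    """Variance inflation factor given the R^2 of one explanatory
    variable regressed on all the other explanatory variables."""
    return 1.0 / (1.0 - r2)


# Hypothetical explanatory variables (illustrative, not project data).
columns = {
    "word_count": [120, 340, 560, 800, 1500, 2100],
    "sentence_count": [8, 21, 35, 52, 95, 140],
    "num_links": [3, 0, 5, 1, 4, 2],
}

flagged = highly_correlated_pairs(columns)
for name_a, name_b, r in flagged:
    print(name_a, name_b, round(r, 3))
```

For each flagged pair, one of the two variables would be a candidate for removal before the VIF statistics of the remaining variables are checked.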

| Line 158: | Line 177: | ||

<p>We create our explanatory model by running stepwise regression within the Fit Model platform in JMP Pro 13 on the variables filtered in the steps above, together with the categorical variables (which JMP dummy codes). We use the p-value threshold stopping rule at 5%, which gives the best R<sup>2</sup> and adjusted R<sup>2</sup> values, indicating the best model fit given the available data. We ran the regression in the forward, backward and mixed directions and found that the R<sup>2</sup> values for the three directions are the same. We therefore select the mixed direction to run our model with. The AICc and BIC measures are not used since we are building an explanatory model rather than a predictive one.</p> | <p>We create our explanatory model by running stepwise regression within the Fit Model platform in JMP Pro 13 on the variables filtered in the steps above, together with the categorical variables (which JMP dummy codes). We use the p-value threshold stopping rule at 5%, which gives the best R<sup>2</sup> and adjusted R<sup>2</sup> values, indicating the best model fit given the available data. We ran the regression in the forward, backward and mixed directions and found that the R<sup>2</sup> values for the three directions are the same. We therefore select the mixed direction to run our model with. The AICc and BIC measures are not used since we are building an explanatory model rather than a predictive one.</p> | ||

<br> | <br> | ||

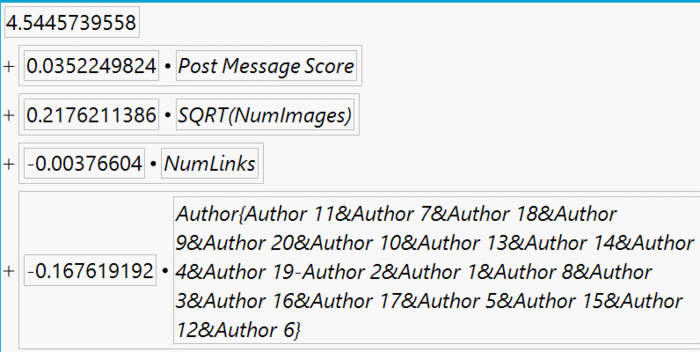

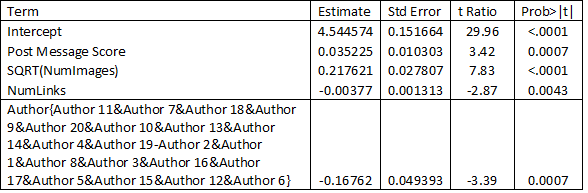

| + | <p>The regression equation and parameter estimates are shown below:</p> | ||

| − | [[File:artregeqn.png|center| | + | [[File:artregeqn.png|700px|center]] |

| + | {|style="width:100%;vertical-align:top;margin-top:20px;" | ||

| + | |- | ||

| + | |style="vertical-align:top;width:30%;" | <div style="background: #ffffff; text-align:center; line-height: wrap_content; text-align: center;font-size:12px">Article Regression equation for Ln(Total engagement)</div> | ||

| + | |||

| + | [[File:artparam.png|700px|center]] | ||

| + | {|style="width:100%;vertical-align:top;margin-top:20px;" | ||

| + | |- | ||

| + | |style="vertical-align:top;width:30%;" | <div style="background: #ffffff; text-align:center; line-height: wrap_content; text-align: center;font-size:12px">Article Regression Parameter Estimates for Ln(Total engagement)</div> | ||

{| style="width:100%; vertical-align:top; margin-top:5px;" | {| style="width:100%; vertical-align:top; margin-top:5px;" | ||

|- | |- | ||

| − | | style="vertical-align:top;width:20%;" | <div style="none: solid; border-width:2px; background: #FFFFFF; padding: 10px; font-weight:bold; text-align:center; line-height: wrap_content; text-indent: 20px; font-size:18px"><font color="#b1260e" size=5 face="Century Gothic"> | + | | style="vertical-align:top;width:20%;" | <div style="none: solid; border-width:2px; background: #FFFFFF; padding: 10px; font-weight:bold; text-align:center; line-height: wrap_content; text-indent: 20px; font-size:18px"><font color="#b1260e" size=5 face="Century Gothic">Model Fit and Model Assumptions</font></div><br/> |

| + | [[File:artmodfit.png|700px|center]] | ||

| + | {|style="width:100%;vertical-align:top;margin-top:20px;" | ||

| + | |- | ||

| + | |style="vertical-align:top;width:30%;" | <div style="background: #ffffff; text-align:center; line-height: wrap_content; text-align: center;font-size:12px">Article Regression Model Fit</div> | ||

| + | <p> | ||

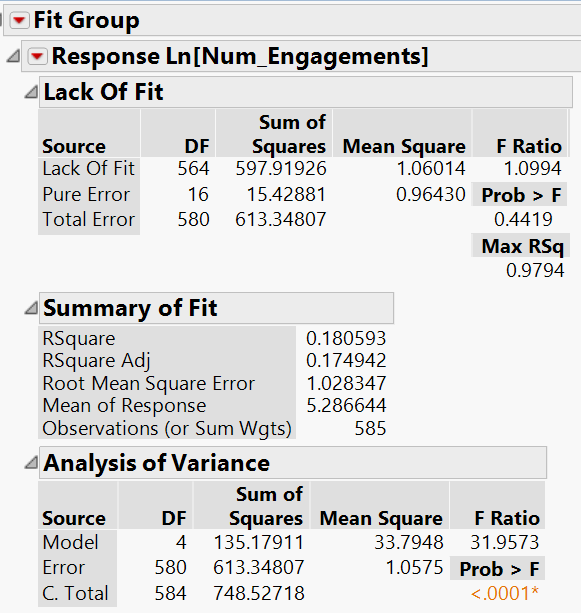

| + | The goodness of fit is represented by the R<sup>2</sup> value. R<sup>2</sup> is a statistical measure known as the coefficient of determination which measures how close data points are to the line generated by the model. | ||

| + | |||

| + | The R<sup>2</sup> value for the articles model is 0.18, meaning that the model explains 18% of the variation in Ln(Total Engagement) for articles. | ||

| + | <br><br> | ||

| + | To gauge the explanatory power of each additional explanatory variable added, we also consider the adjusted R<sup>2</sup> value, which adjusts for the number of explanatory variables in the model – that is, it would only increase if each explanatory variable added improves the model more than what is expected by chance. | ||

| + | |||

| + | The adjusted R<sup>2</sup> value for the articles model is 0.17, meaning that about 17% of the variation in Ln(Total Engagement) for articles is explained once the number of explanatory variables in the model is taken into account. | ||

| + | |||

| + | </p><br> | ||
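The two fit measures discussed above follow standard formulas, sketched here in Python. The function names and the toy `actual` and `predicted` values are assumptions made for illustration; the project's own figures come from the JMP output.

```python
def r_squared(actual, predicted):
    """Coefficient of determination: 1 - SS_residual / SS_total."""
    mean = sum(actual) / len(actual)
    ss_res = sum((a - p) ** 2 for a, p in zip(actual, predicted))
    ss_tot = sum((a - mean) ** 2 for a in actual)
    return 1 - ss_res / ss_tot


def adjusted_r_squared(r2, n_obs, n_predictors):
    """Adjusted R^2: penalises R^2 for the number of predictors used."""
    return 1 - (1 - r2) * (n_obs - 1) / (n_obs - n_predictors - 1)


# Toy values (illustrative, not project data).
actual = [1.0, 2.0, 3.0, 4.0]
predicted = [1.1, 1.9, 3.2, 3.8]

r2 = r_squared(actual, predicted)
adj_r2 = adjusted_r_squared(r2, n_obs=len(actual), n_predictors=1)
print(round(r2, 3), round(adj_r2, 3))
```

Because of the penalty term, the adjusted value is never larger than R<sup>2</sup> itself, which matches the small gap between 0.18 and 0.17 reported for the articles model.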

| + | |||

| + | <p>We then move on to the model assumptions to validate our regression model findings. There are several assumptions of linear regression models which need to be met, as seen below: | ||

| + | * Relationship between the dependent variable and independent variables is linear | ||

| + | * Expected mean error of the regression model is zero | ||

| + | * Errors/Residuals have constant variance (Homoscedastic) | ||

| + | * Errors/Residuals are independent of each other | ||

| + | * Errors/Residuals are normally distributed and have a population mean of zero | ||

| + | </p> | ||

| + | {| style="width:100%; vertical-align:top; margin-top:5px;" | ||

| + | |- | ||

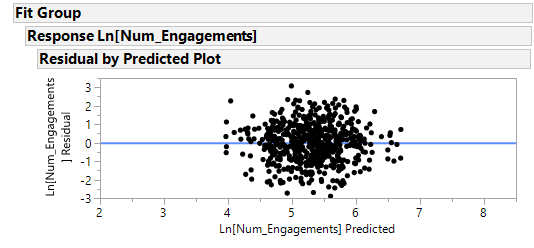

| + | | style="vertical-align:top;width:20%;" | <div style="none: solid; border-width:2px; background: #FFFFFF; padding: 10px; font-weight:bold; text-align:center; line-height: wrap_content; text-indent: 20px; font-size:18px"><font color="#b1260e" size=3 face="Century Gothic">Assumption 1: Linearity</font></div><br/> | ||

| + | |||

| + | [[File:Assumption_1n3.png|700px|center]] | ||

| + | {|style="width:100%;vertical-align:top;margin-top:20px;" | ||

| + | |- | ||

| + | |style="vertical-align:top;width:30%;" | <div style="background: #ffffff; text-align:center; line-height: wrap_content; text-align: center;font-size:12px">Residual by predicted plot</div> | ||

| + | |||

| + | <p>The points are quite symmetrically distributed around the line, which indicates that the residuals are random and hence that the linearity assumption is fulfilled.</p> | ||

{| style="width:100%; vertical-align:top; margin-top:5px;" | {| style="width:100%; vertical-align:top; margin-top:5px;" | ||

|- | |- | ||

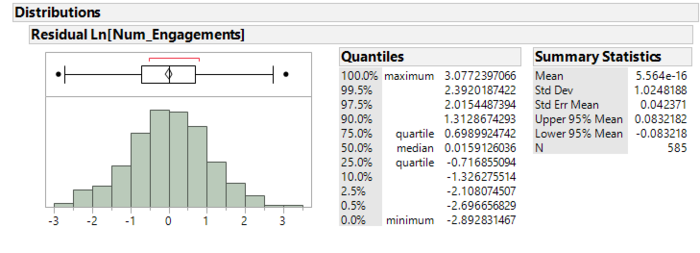

| − | | style="vertical-align:top;width:20%;" | <div style="none: solid; border-width:2px; background: #FFFFFF; padding: 10px; font-weight:bold; text-align:center; line-height: wrap_content; text-indent: 20px; font-size:18px"><font color="#b1260e" size=5 face="Century Gothic"> | + | | style="vertical-align:top;width:20%;" | <div style="none: solid; border-width:2px; background: #FFFFFF; padding: 10px; font-weight:bold; text-align:center; line-height: wrap_content; text-indent: 20px; font-size:18px"><font color="#b1260e" size=3 face="Century Gothic">Assumption 2: Zero expected mean error</font></div><br/> |

| + | |||

| + | [[File:Assumption_2.png|700px|center]] | ||

| + | {|style="width:100%;vertical-align:top;margin-top:20px;" | ||

| + | |- | ||

| + | |style="vertical-align:top;width:30%;" | <div style="background: #ffffff; text-align:center; line-height: wrap_content; text-align: center;font-size:12px">Distribution of residuals</div> | ||

| + | |||

| + | <p>The residuals largely follow a normal distribution with a mean close to zero and a standard deviation close to one.</p> | ||

| + | |||

| + | {| style="width:100%; vertical-align:top; margin-top:5px;" | ||

| + | |- | ||

| + | | style="vertical-align:top;width:20%;" | <div style="none: solid; border-width:2px; background: #FFFFFF; padding: 10px; font-weight:bold; text-align:center; line-height: wrap_content; text-indent: 20px; font-size:18px"><font color="#b1260e" size=3 face="Century Gothic">Assumption 3: Homoscedasticity</font></div><br/> | ||

| + | [[File:Assumption_1n3.png|700px|center]] | ||

| + | {|style="width:100%;vertical-align:top;margin-top:20px;" | ||

| + | |- | ||

| + | |style="vertical-align:top;width:30%;" | <div style="background: #ffffff; text-align:center; line-height: wrap_content; text-align: center;font-size:12px">Residual by predicted plot</div> | ||

| + | |||

| + | <p>The distribution of the points in the plot is rather symmetrical, with no sign of the residuals increasing as the predicted values increase (the plot is not funnel shaped). This indicates that the residuals have constant variance and are hence homoscedastic.</p> | ||

| + | |||

| + | {| style="width:100%; vertical-align:top; margin-top:5px;" | ||

| + | |- | ||

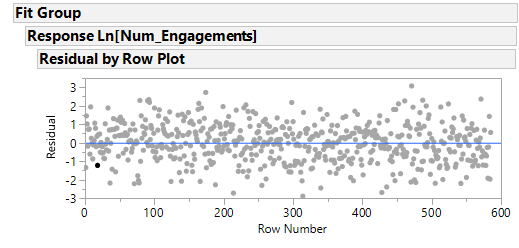

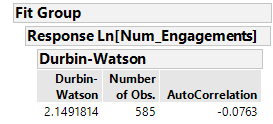

| + | | style="vertical-align:top;width:20%;" | <div style="none: solid; border-width:2px; background: #FFFFFF; padding: 10px; font-weight:bold; text-align:center; line-height: wrap_content; text-indent: 20px; font-size:18px"><font color="#b1260e" size=3 face="Century Gothic">Assumption 4: Independent Residuals</font></div><br/> | ||

| + | |||

| + | [[File:Assumption_4a.png|700px|center]] | ||

| + | {|style="width:100%;vertical-align:top;margin-top:20px;" | ||

| + | |- | ||

| + | |style="vertical-align:top;width:30%;" | <div style="background: #ffffff; text-align:center; line-height: wrap_content; text-align: center;font-size:12px">Residual by row plot</div> | ||

| + | |||

| + | <p>The scatter plot shows the residuals randomly distributed around the line, which indicates that they are time independent. This also suggests that the residuals are not autocorrelated. | ||

| + | </p> | ||

| + | |||

| + | [[File:Assumption_4b.png|400px|center]] | ||

| + | {|style="width:100%;vertical-align:top;margin-top:20px;" | ||

| + | |- | ||

| + | |style="vertical-align:top;width:30%;" | <div style="background: #ffffff; text-align:center; line-height: wrap_content; text-align: center;font-size:12px">Durbin-Watson test of no autocorrelation</div> | ||

| + | |||

| + | <p>The Durbin-Watson statistic is d = 2.15, which lies within the rule-of-thumb range of 1.5 < d < 2.5. We therefore assume that there is no first-order linear autocorrelation in the residuals of our multiple linear regression.</p> | ||
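For reference, the Durbin-Watson statistic is the sum of squared differences between successive residuals divided by the sum of squared residuals. The sketch below uses hypothetical residual sequences, and `within_rule_of_thumb` is a helper named for this example that encodes the 1.5 to 2.5 range quoted above.

```python
def durbin_watson(residuals):
    """d = sum((e_t - e_{t-1})^2) / sum(e_t^2); values near 2 suggest
    no first-order autocorrelation."""
    numerator = sum((residuals[t] - residuals[t - 1]) ** 2
                    for t in range(1, len(residuals)))
    denominator = sum(e ** 2 for e in residuals)
    return numerator / denominator


def within_rule_of_thumb(d, low=1.5, high=2.5):
    """Check d against the 1.5 < d < 2.5 rule of thumb."""
    return low < d < high


# Hypothetical residual sequences (illustrative, not project data).
alternating = [1, -1, 1, -1]   # strong negative autocorrelation
constant = [1, 1, 1, 1]        # strong positive autocorrelation

print(durbin_watson(alternating))   # large d, towards 4
print(durbin_watson(constant))      # small d, towards 0
print(within_rule_of_thumb(2.15))   # the value reported for the article model
```

Values of d close to 0 point to positive autocorrelation and values close to 4 to negative autocorrelation, which is why a value of 2.15 is read as no autocorrelation.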

| + | |||

| + | {| style="width:100%; vertical-align:top; margin-top:5px;" | ||

| + | |- | ||

| + | | style="vertical-align:top;width:20%;" | <div style="none: solid; border-width:2px; background: #FFFFFF; padding: 10px; font-weight:bold; text-align:center; line-height: wrap_content; text-indent: 20px; font-size:18px"><font color="#b1260e" size=3 face="Century Gothic">Assumption 5: Residuals are normally distributed</font></div><br/> | ||

| + | |||

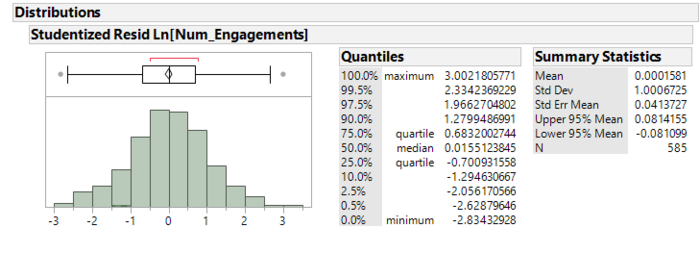

| + | [[File:Assumption_5.png|700px|center]] | ||

| + | {|style="width:100%;vertical-align:top;margin-top:20px;" | ||

| + | |- | ||

| + | |style="vertical-align:top;width:30%;" | <div style="background: #ffffff; text-align:center; line-height: wrap_content; text-align: center;font-size:12px">Studentized Residual distribution</div> | ||

| + | |||

| + | <p>The residuals largely follow a normal distribution with a mean close to zero and a standard deviation close to one, hence fulfilling this assumption.</p> | ||

| + | |||

{| style="width:100%; vertical-align:top; margin-top:5px;" | {| style="width:100%; vertical-align:top; margin-top:5px;" | ||

|- | |- | ||

| style="vertical-align:top;width:20%;" | <div style="none: solid; border-width:2px; background: #FFFFFF; padding: 10px; font-weight:bold; text-align:center; line-height: wrap_content; text-indent: 20px; font-size:18px"><font color="#b1260e" size=5 face="Century Gothic">Interpretation and Managerial insights</font></div><br/> | | style="vertical-align:top;width:20%;" | <div style="none: solid; border-width:2px; background: #FFFFFF; padding: 10px; font-weight:bold; text-align:center; line-height: wrap_content; text-indent: 20px; font-size:18px"><font color="#b1260e" size=5 face="Century Gothic">Interpretation and Managerial insights</font></div><br/> | ||

| + | |||

| + | |||

| + | <p>A multiple stepwise linear regression was run to explain Ln(Total Engagement) for article performance from post message sentiment score, number of links, SQRT(Number of images) and article authors. These variables statistically significantly explained Ln(Total Engagement), F(33.79, 1.06) = 31.96, p < 0.0001***, adjusted R<sup>2</sup> = 0.17. All selected variables contributed statistically significantly to the explanation, p < .05. The article regression model has met all 5 assumptions highlighted above, and we believe that our sponsor can benefit from knowing the determinants of social media engagement identified by the regression equation for their articles.</p> | ||

| + | <br><br> | ||

| + | |||

| + | <p> | ||

| + | While our article explanatory regression model explains only about 17-18% of the variation in a post’s engagement performance, insights can still be gleaned from it. The following points can be drawn from the article regression model: | ||

| + | <br><br> | ||

| + | * A positive sounding post message to accompany the article can help increase engagement. | ||

| + | * Articles that contain too many embedded links may not perform well in terms of engagement. This could suggest that viewers tend not to read the article or are referred elsewhere as a result. | ||

| + | * The number of images used in an article matters, and more images can help improve the engagement level of the article. This applies to categories that require visually appealing information. | ||

| + | * Authors A, B, C, D, E, F, G, H, I, and J are performing well and can be considered suited for writing their relevant categories whereas authors K, L, M, N, O, P, Q, R, S, and T are performing poorly, suggesting the need for either improvement or adjustment of assignments. | ||

| + | |||

| + | </p> | ||

Latest revision as of 22:02, 23 April 2017


Multiple Linear Regression Model: What makes a good Facebook post? This section outlines the explanatory model built on the article dataset from Facebook Insights, supplemented with our crawled variables to form a complete article dataset.

|